🏷️ Fine-tune a sentiment classifier with your own data#

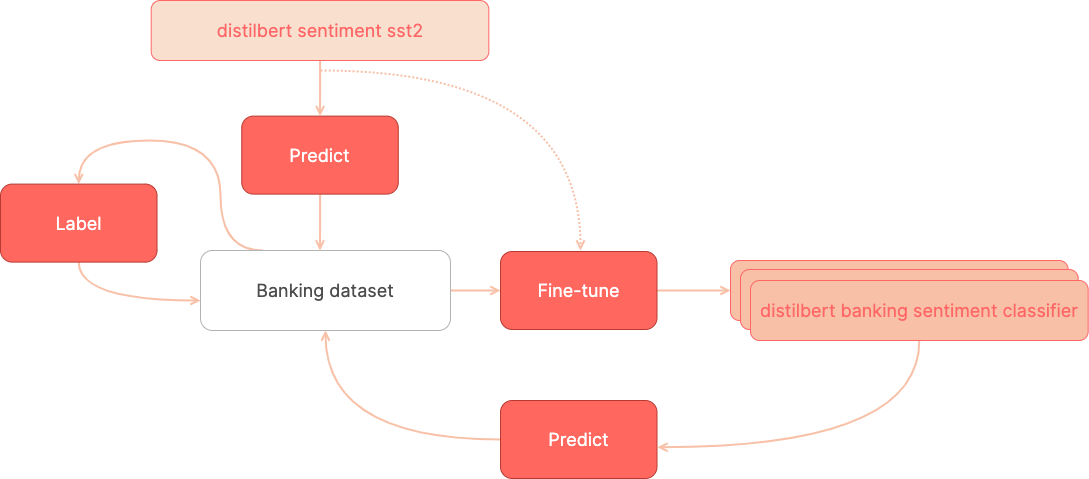

In this tutorial, we’ll build a sentiment classifier for user requests in the banking domain as follows:

🏁 Start with the most popular sentiment classifier on the Hugging Face Hub (almost 4 million monthly downloads as of December 2021) which has been fine-tuned on the SST2 sentiment dataset.

🏷️ Label a training dataset with banking user requests starting with the pre-trained sentiment classifier predictions.

⚙️ Fine-tune the pre-trained classifier with your training dataset.

🏷️ Label more data by correcting the predictions of the fine-tuned model.

⚙️ Fine-tune the pre-trained classifier with the extended training dataset.

Introduction#

This tutorial will show you how to fine-tune a sentiment classifier for your own domain, starting with no labeled data.

Most online tutorials about fine-tuning models assume you already have a training dataset. You’ll find many tutorials for fine-tuning a pre-trained model with widely used datasets, such as IMDB for sentiment analysis.

However, very often what you want is to fine-tune a model for your use case. It’s well-known that NLP model performance usually degrades with “out-of-domain” data. For example, a sentiment classifier pre-trained on movie reviews (e.g., IMDB) will not perform very well with customer requests.

This is an overview of the workflow we’ll be following:

Let’s get started!

Running Argilla#

For this tutorial, you will need to have an Argilla server running. There are two main options for deploying and running Argilla:

Deploy Argilla on Hugging Face Spaces: If you want to run tutorials with external notebooks (e.g., Google Colab) and you have an account on Hugging Face, you can deploy Argilla on Spaces with a few clicks:

For details about configuring your deployment, check the official Hugging Face Hub guide.

Launch Argilla using Argilla’s quickstart Docker image: This is the recommended option if you want Argilla running on your local machine. Note that this option will only let you run the tutorial locally and not with an external notebook service.

For more information on deployment options, please check the Deployment section of the documentation.

Tip

This tutorial is a Jupyter Notebook. There are two options to run it:

Use the Open in Colab button at the top of this page. This option allows you to run the notebook directly on Google Colab. Don’t forget to change the runtime type to GPU for faster model training and inference.

Download the .ipynb file by clicking on the View source link at the top of the page. This option allows you to download the notebook and run it on your local machine or on a Jupyter Notebook tool of your choice.

[ ]:

%pip install argilla "transformers[torch]" datasets scikit-learn ipywidgets -qqq

Let’s import the Argilla module for reading and writing data:

[ ]:

import argilla as rg

If you are running Argilla using the Docker quickstart image or Hugging Face Spaces, you need to initialize the Argilla client with the api_url and api_key:

[ ]:

# Replace api_url with the url to your HF Spaces URL if using Spaces
# Replace api_key if you configured a custom API key
# Replace workspace with the name of your workspace
rg.init(
    api_url="http://localhost:6900",
    api_key="owner.apikey",
    workspace="admin"
)
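To avoid hard-coding credentials in the notebook, the same three settings could be read from environment variables. This is a minimal sketch: the variable names ARGILLA_API_URL, ARGILLA_API_KEY and ARGILLA_WORKSPACE and the helper argilla_init_kwargs are assumptions chosen for this example, and the defaults simply mirror the values shown above.

```python
import os


def argilla_init_kwargs(env=None):
    """Build keyword arguments for rg.init from environment variables.

    The variable names are assumptions made for this sketch; the fallback
    values are the quickstart defaults used in this tutorial.
    """
    env = os.environ if env is None else env
    return {
        "api_url": env.get("ARGILLA_API_URL", "http://localhost:6900"),
        "api_key": env.get("ARGILLA_API_KEY", "owner.apikey"),
        "workspace": env.get("ARGILLA_WORKSPACE", "admin"),
    }


# Toy environment: only the URL is overridden, the rest falls back to defaults.
kwargs = argilla_init_kwargs({"ARGILLA_API_URL": "https://example.hf.space"})
print(kwargs)
```

With Argilla imported as rg, the result could then be passed as rg.init(**argilla_init_kwargs()).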

If you’re running a private Hugging Face Space, you will also need to set the HF_TOKEN as follows:

[ ]:

# # Set the HF_TOKEN environment variable

# import os

# os.environ['HF_TOKEN'] = "your-hf-token"

# # Replace api_url with the url to your HF Spaces URL

# # Replace api_key if you configured a custom API key

# # Replace workspace with the name of your workspace

# rg.init(

# api_url="https://[your-owner-name]-[your_space_name].hf.space",

# api_key="owner.apikey",

# workspace="admin",

# extra_headers={"Authorization": f"Bearer {os.environ['HF_TOKEN']}"},

# )

Finally, let’s include the imports we need:

[ ]:

import numpy as np

from datasets import load_dataset, load_metric, concatenate_datasets

from transformers import pipeline, AutoTokenizer, AutoModelForSequenceClassification

from transformers import TrainingArguments, Trainer

Enable Telemetry#

We gain valuable insights from how you interact with our tutorials. Running the following lines of code helps us understand whether this tutorial is serving you effectively, so we can offer you more suitable content. This is entirely anonymous, and you can skip this step if you prefer. For more info, please check out the Telemetry page.

[ ]:

try:
    from argilla.utils.telemetry import tutorial_running

    tutorial_running()
except ImportError:
    print("Telemetry is introduced in Argilla 1.20.0 and not found in the current installation. Skipping telemetry.")

Preliminaries#

For building our fine-tuned classifier we’ll be using two main resources, both available in the 🤗 Hub:

A dataset in the banking domain: banking77

A pre-trained sentiment classifier: distilbert-base-uncased-finetuned-sst-2-english

Dataset: Banking 77#

This dataset contains online banking user queries annotated with their corresponding intents.

In our case, we’ll label the sentiment of these queries. This might be useful for digital assistants and customer service analytics.

Let’s load the dataset directly from the hub and split the dataset into two 50% subsets. We’ll start with the to_label1 split for data exploration and annotation, and keep to_label2 for further iterations.

[ ]:

banking_ds = load_dataset("PolyAI/banking77")

to_label1, to_label2 = (
    banking_ds["train"].train_test_split(test_size=0.5, seed=42).values()
)

Model: sentiment distilbert fine-tuned on sst-2#

As of December 2021, distilbert-base-uncased-finetuned-sst-2-english is among the five most popular text-classification models on the Hugging Face Hub.

This model is a distilbert model fine-tuned on SST-2 (Stanford Sentiment Treebank), a highly popular sentiment classification benchmark.

As we will see later, this is a general-purpose sentiment classifier, which will need further fine-tuning for specific use cases and styles of text. In our case, we’ll explore its quality on banking user queries and build a training set for adapting it to this domain.

Let’s load the model and test it with an example from our dataset:

[3]:

sentiment_classifier = pipeline(
    model="distilbert-base-uncased-finetuned-sst-2-english",
    task="sentiment-analysis",
    top_k=None,
)

to_label1[3]["text"], sentiment_classifier(to_label1[3]["text"])

[3]:

('Hi, Last week I have contacted the seller for a refund as directed by you, but i have not received the money yet. Please look into this issue with seller and help me in getting the refund.',

[[{'label': 'NEGATIVE', 'score': 0.9934700727462769},

{'label': 'POSITIVE', 'score': 0.0065299225971102715}]])

The model assigns more probability to the NEGATIVE class. Following our annotation policy (read more below), we’ll label examples like this as POSITIVE as they are general questions, not related to issues or problems with the banking application. The ultimate goal will be to fine-tune the model to predict POSITIVE for these cases.

A note on sentiment analysis and data annotation#

Sentiment analysis is one of the most subjective tasks in NLP. What we understand by sentiment will vary from one application to another and depend on the business objectives of the project. Also, sentiment can be modeled in different ways, leading to different labeling schemes. For example, sentiment can be modeled as a real value (going from -1 to 1, from 0 to 1.0, etc.) or with two or more labels (including different degrees such as positive, negative, neutral, etc.).

For this tutorial, we’ll use the original labeling scheme defined by the pre-trained model which is composed of two labels: POSITIVE and NEGATIVE. We could have added the NEUTRAL label, but let’s keep it simple.

Another important issue when approaching a data annotation project is the annotation guidelines, which explain how to assign the labels to specific examples. As we’ll see later, the messages we’ll be labeling are mostly questions with a neutral sentiment, which we’ll label with the POSITIVE label, and some others are negative questions which we’ll label with the NEGATIVE label. Later on, we’ll show some examples of each label.

1. Run the pre-trained model over the dataset and log the predictions#

As a first step, let’s use the pre-trained model for predicting over our raw dataset. For this, we will use the handy dataset.map method from the datasets library.

The following steps could be simplified by using the auto-monitor support for Hugging Face pipelines. You can find more details in the Monitoring guide.

Predict#

[ ]:

def predict(examples):
    return {"predictions": sentiment_classifier(examples["text"], truncation=True)}


# Add .select(range(10)) before map if you just want to test this quickly with 10 examples
to_label1 = to_label1.map(predict, batched=True, batch_size=4)

Note

If you don’t want to run the predictions yourself, you can also load the records with the predictions directly from the Hugging Face Hub: load_dataset("argilla/sentiment-banking", split="train"), see below for more details.

Build and log the dataset#

The following code builds a list of Argilla records with the predictions and logs the records to Argilla to label our first training set.

[ ]:

records = []
for example in to_label1.shuffle():
    record = rg.TextClassificationRecord(
        text=example["text"],
        metadata={
            "category": example["label"]
        },  # Log the intents for exploration of specific intents
        prediction=[(pred["label"], pred["score"]) for pred in example["predictions"]],
        prediction_agent="distilbert-base-uncased-finetuned-sst-2-english",
    )
    records.append(record)

rg.log(name="labeling_with_pretrained", records=records)

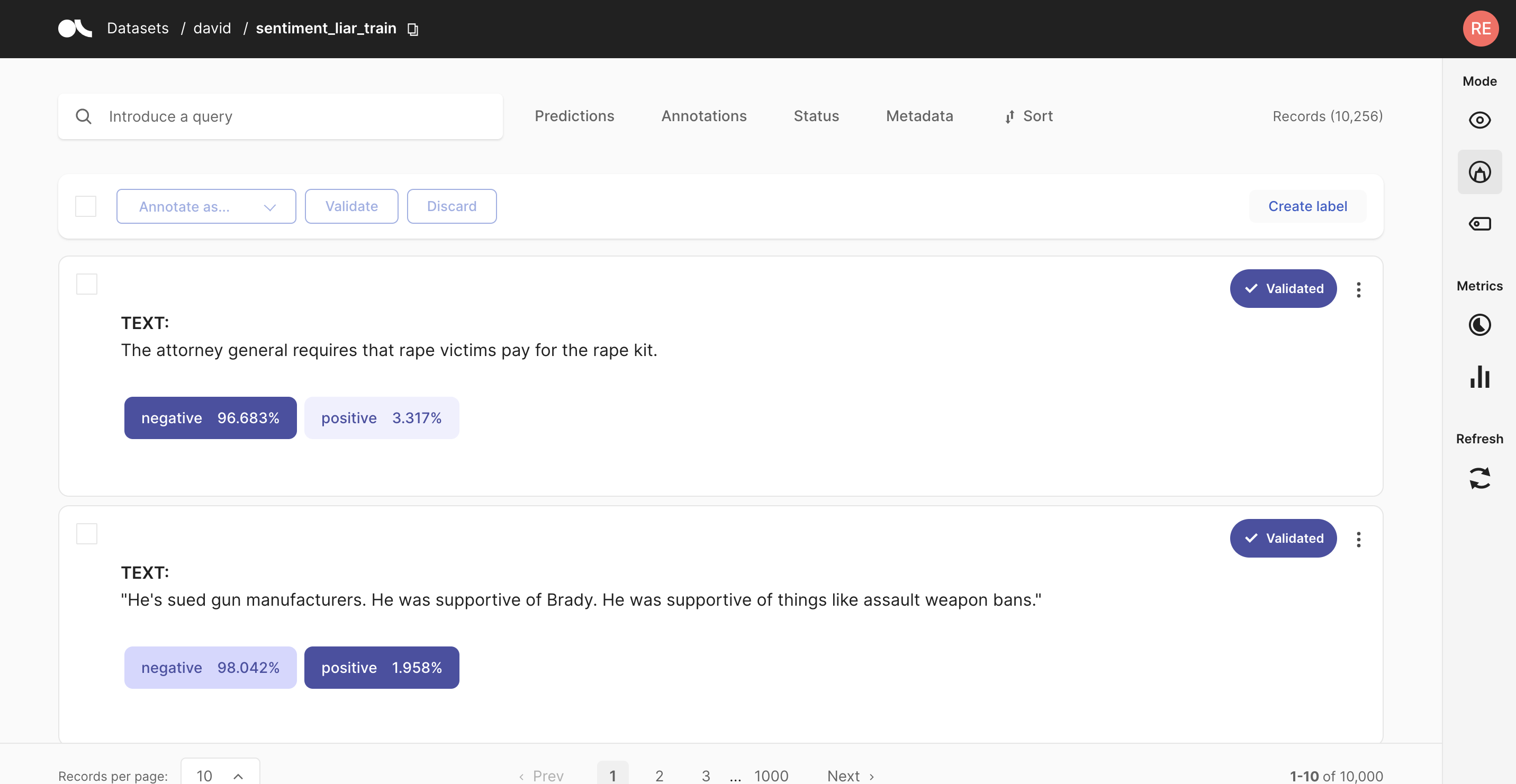
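The prediction field of each record is a list of (label, score) tuples built from the pipeline output. The following sketch isolates that conversion on the example scores shown earlier in this tutorial; the helper name to_prediction_tuples is an assumption made for illustration.

```python
from typing import Dict, List, Tuple, Union

# One pipeline result when all class scores are returned:
# a list with one {"label": ..., "score": ...} dict per class.
ClassScores = List[Dict[str, Union[str, float]]]


def to_prediction_tuples(class_scores: ClassScores) -> List[Tuple[str, float]]:
    """Turn [{'label': ..., 'score': ...}, ...] into [(label, score), ...]."""
    return [(str(item["label"]), float(item["score"])) for item in class_scores]


# Scores copied from the example output shown earlier in this tutorial.
example_scores = [
    {"label": "NEGATIVE", "score": 0.9934700727462769},
    {"label": "POSITIVE", "score": 0.0065299225971102715},
]

prediction = to_prediction_tuples(example_scores)
print(prediction)
```

Keeping the score of every class, not only the top one, is what later makes it possible to filter records by prediction score in the UI.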

2. Explore and label data with the pre-trained model#

In this step, we’ll start by exploring how the pre-trained model is performing with our dataset.

At first sight:

The pre-trained sentiment classifier tends to label most of the examples as NEGATIVE (4,835 of 5,001 records). You can see this yourself using the Predictions / Predicted as: filter.

Using this filter and filtering by predicted as POSITIVE, we see that examples like “I didn’t withdraw the amount of cash that is showing up in the app.” are not predicted as expected (according to our basic “annotation policy” described in the preliminaries).
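The same distribution of predicted labels can also be checked in code rather than in the UI. This is a small sketch on toy scores (the values are made up for illustration, and the helper names top_label and predicted_label_counts are assumptions):

```python
from collections import Counter


def top_label(class_scores):
    """Return the label with the highest score for one example."""
    return max(class_scores, key=lambda item: item["score"])["label"]


def predicted_label_counts(all_predictions):
    """Count how often each label is the top prediction."""
    return Counter(top_label(scores) for scores in all_predictions)


# Toy predictions in the same shape the pipeline returns (one list per text).
toy_predictions = [
    [{"label": "NEGATIVE", "score": 0.99}, {"label": "POSITIVE", "score": 0.01}],
    [{"label": "NEGATIVE", "score": 0.80}, {"label": "POSITIVE", "score": 0.20}],
    [{"label": "POSITIVE", "score": 0.70}, {"label": "NEGATIVE", "score": 0.30}],
]

counts = predicted_label_counts(toy_predictions)
print(counts)
```

On the real data, something like predicted_label_counts(to_label1["predictions"]) should give the same picture, assuming the predictions column produced in step 1 is still present.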

Taking into account this analysis, we can start labeling our data.

Argilla provides you with a search-driven UI to annotate data, using free-text search, search filters and the Elasticsearch query DSL for advanced queries. This is especially useful for sparse datasets, tasks with a high number of labels, or unbalanced classes. In the standard case, we recommend you to follow the workflow below:

Start labeling examples sequentially, without using search features. This way you will annotate a fraction of your data which will be aligned with the dataset distribution.

Once you have a sense of the data, you can start using filters and search features to annotate examples with specific labels. In our case, we’ll label examples predicted as POSITIVE by our pre-trained model, and then a few examples predicted as NEGATIVE.

After some minutes, we’ve labelled almost 5% of our raw dataset with more than 200 annotated examples, which is a small dataset but should be enough for a first fine-tuning of our banking sentiment classifier:

3. Fine-tune the pre-trained model#

In this step, we’ll load our training set from Argilla and fine-tune using the Trainer API from Hugging Face transformers. For this, we closely follow the guide Fine-tuning a pre-trained model from the transformers docs.

First, let’s load the annotations from our dataset using the query parameter from the load method. The Validated status corresponds to annotated records.

[47]:

rb_dataset = rg.load(name="labeling_with_pretrained", query="status:Validated")

rb_dataset.to_pandas().head(3)

[47]:

| | inputs | prediction | prediction_agent | annotation | annotation_agent | multi_label | explanation | id | metadata | status | event_timestamp | metrics | search_keywords |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0 | {'text': 'I would like to cancel a purchase.'} | [(NEGATIVE, 0.9997695088386536), (POSITIVE, 0.... | distilbert-base-uncased-finetuned-sst-2-english | POSITIVE | argilla | False | None | 0002cbd9-b687-462a-bbd2-3130f4c88d8d | {'category': 52} | Validated | None | None | None |
| 1 | {'text': 'What's up with the extra fee I got?'} | [(NEGATIVE, 0.9968097805976868), (POSITIVE, 0.... | distilbert-base-uncased-finetuned-sst-2-english | NEGATIVE | argilla | False | None | 0009f445-4844-4ccd-9ea8-207a1fb0e239 | {'category': 19} | Validated | None | None | None |
| 2 | {'text': 'Do you have an age requirement when ... | [(NEGATIVE, 0.9825802445411682), (POSITIVE, 0.... | distilbert-base-uncased-finetuned-sst-2-english | POSITIVE | argilla | False | None | 0012e385-643c-4660-ad66-5b4339bb3999 | {'category': 1} | Validated | None | None | None |

Prepare training and test datasets#

Let’s now prepare our dataset for training and testing our sentiment classifier, using the datasets library:

[ ]:

# Create 🤗 dataset with labels as numeric ids
train_ds = rb_dataset.prepare_for_training()

# Tokenize our datasets
tokenizer = AutoTokenizer.from_pretrained(
    "distilbert-base-uncased-finetuned-sst-2-english"
)


def tokenize_function(examples):
    return tokenizer(examples["text"], padding="max_length", truncation=True)


tokenized_train_ds = train_ds.map(tokenize_function, batched=True)

# Split the data into a training and evaluation set
train_dataset, eval_dataset = tokenized_train_ds.train_test_split(
    test_size=0.2, seed=42
).values()
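The padding="max_length" and truncation=True arguments make every tokenized example the same length. The sketch below imitates that behavior on plain lists of token ids. It is a simplification under stated assumptions: the helper pad_or_truncate is hypothetical, the ids are toy values, and a real tokenizer also handles special tokens when truncating.

```python
def pad_or_truncate(token_ids, max_length, pad_id=0):
    """Clip a sequence to max_length, then right-pad it with pad_id.

    Returns input ids plus an attention mask that marks real tokens
    with 1 and padding positions with 0.
    """
    clipped = list(token_ids[:max_length])
    n_pad = max_length - len(clipped)
    return {
        "input_ids": clipped + [pad_id] * n_pad,
        "attention_mask": [1] * len(clipped) + [0] * n_pad,
    }


# A short sequence gets padded, a long one gets truncated.
short_example = pad_or_truncate([5, 6, 7], max_length=5)
long_example = pad_or_truncate([1, 2, 3, 4, 5, 6, 7], max_length=5)
print(short_example)
print(long_example)
```

Padding everything to the maximum length is simple but wasteful for short banking queries; it keeps this tutorial's code minimal.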

Train our sentiment classifier#

As we mentioned before, we’re going to fine-tune the distilbert-base-uncased-finetuned-sst-2-english model. Another option would be to fine-tune a distilbert masked language model from scratch, but we leave this experiment to you.

Let’s load the model and configure the trainer:

[ ]:

model = AutoModelForSequenceClassification.from_pretrained(
    "distilbert-base-uncased-finetuned-sst-2-english"
)

training_args = TrainingArguments(
    "distilbert-base-uncased-sentiment-banking",
    evaluation_strategy="epoch",
    logging_steps=30,
)

metric = load_metric("accuracy")


def compute_metrics(eval_pred):
    logits, labels = eval_pred
    predictions = np.argmax(logits, axis=-1)
    return metric.compute(predictions=predictions, references=labels)


trainer = Trainer(
    args=training_args,
    model=model,
    train_dataset=train_dataset,
    eval_dataset=eval_dataset,
    compute_metrics=compute_metrics,
)
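To make the metric concrete, here is a dependency-free sketch of what compute_metrics calculates: take the highest-scoring class for each row of logits and compare it with the reference label. The helper names and toy values are assumptions for illustration and are not part of the training code.

```python
def argmax(values):
    """Index of the largest value in a list."""
    return max(range(len(values)), key=lambda i: values[i])


def accuracy_from_logits(logits, labels):
    """Fraction of rows whose highest logit matches the reference label."""
    predictions = [argmax(row) for row in logits]
    correct = sum(int(pred == ref) for pred, ref in zip(predictions, labels))
    return {"accuracy": correct / len(labels)}


# Toy logits for four examples and two classes (made-up numbers).
toy_logits = [[2.0, -1.0], [0.1, 0.3], [-0.5, 1.5], [1.2, 0.4]]
toy_labels = [0, 1, 0, 0]

result = accuracy_from_logits(toy_logits, toy_labels)
print(result)  # three of the four rows match their label
```

With only around 200 annotated examples and a 20% evaluation split, accuracy is computed on a few dozen records, so small differences between runs should be read with caution.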

And finally, we can train our first model!

[ ]:

trainer.train()

4. Testing the fine-tuned model#

In this step, let’s first test the model we have just trained.

Let’s create a new pipeline with our model:

[ ]:

finetuned_sentiment_classifier = pipeline(
    model=model.to("cpu"),
    tokenizer=tokenizer,
    task="sentiment-analysis",
    return_all_scores=True,
)

Then, we can compare its predictions with the pre-trained model and an example:

[ ]:

finetuned_sentiment_classifier(

"I need to deposit my virtual card, how do i do that."

), sentiment_classifier("I need to deposit my virtual card, how do i do that.")

As you can see, our fine-tuned model now classifies this general question (not related to issues or problems) as POSITIVE, while the pre-trained model still classifies it as NEGATIVE.

Let’s now check an example related to an issue where both models work as expected:

[ ]:

finetuned_sentiment_classifier(

"Why is my payment still pending?"

), sentiment_classifier("Why is my payment still pending?")

5. Run our fine-tuned model over the dataset and log the predictions#

Let’s now create a dataset from the remaining records (those which we haven’t annotated in the first annotation session).

We’ll do this using the Default status, which means the record hasn’t been assigned a label. From here, this is basically the same as step 1, in this case using our fine-tuned model. Let’s take advantage of the datasets map feature, to make batched predictions. Afterward, we can convert the dataset directly to Argilla records again and log them to the web app.

[ ]:

rb_dataset = rg.load(name="labeling_with_pretrained", query="status:Default")


def predict(examples):
    texts = [example["text"] for example in examples["inputs"]]
    return {
        "prediction": finetuned_sentiment_classifier(texts),
        "prediction_agent": ["distilbert-base-uncased-banking77-sentiment"]
        * len(texts),
    }


ds_dataset = rb_dataset.to_datasets().map(predict, batched=True, batch_size=8)
records = rg.read_datasets(ds_dataset, task="TextClassification")
rg.log(records=records, name="labeling_with_finetuned")

6. Explore and label data with the fine-tuned model#

In this step, we’ll start by exploring how the fine-tuned model is performing with our dataset.

At first sight, using the predicted as filter by POSITIVE and then by NEGATIVE, we can observe that the fine-tuned model predictions are more aligned with our “annotation policy”.

Now that the model is performing better for our use case, we’ll extend our training set with highly informative examples. A typical workflow for doing this is as follows:

Use the prediction score filter for labeling uncertain examples.

Label examples predicted by our fine-tuned model as POSITIVE and then predicted as NEGATIVE to correct the predictions.
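One way to think about "uncertain" examples is the gap between the two class scores: the smaller the gap, the less sure the model is. The sketch below ranks toy records by that margin. The scores are made up, and the helper names and the 0.2 threshold are assumptions for illustration; in the UI the prediction score filter plays this role.

```python
def score_margin(class_scores):
    """Difference between the highest and second-highest class score."""
    ordered = sorted((item["score"] for item in class_scores), reverse=True)
    return ordered[0] - ordered[1]


def select_uncertain(records, max_margin=0.2):
    """Keep records whose margin is at most max_margin, most uncertain first."""
    uncertain = [r for r in records if score_margin(r["prediction"]) <= max_margin]
    return sorted(uncertain, key=lambda r: score_margin(r["prediction"]))


# Toy records: texts come from this tutorial, scores are invented.
toy_records = [
    {
        "text": "I would like to cancel a purchase.",
        "prediction": [
            {"label": "POSITIVE", "score": 0.55},
            {"label": "NEGATIVE", "score": 0.45},
        ],
    },
    {
        "text": "Why is my payment still pending?",
        "prediction": [
            {"label": "NEGATIVE", "score": 0.98},
            {"label": "POSITIVE", "score": 0.02},
        ],
    },
    {
        "text": "What's up with the extra fee I got?",
        "prediction": [
            {"label": "NEGATIVE", "score": 0.52},
            {"label": "POSITIVE", "score": 0.48},
        ],
    },
]

selected = select_uncertain(toy_records)
print([record["text"] for record in selected])
```

Labeling these borderline cases first tends to give the next fine-tuning round more information per annotation than confirming confident predictions.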

After spending some minutes, we labelled almost 2% of our raw dataset with around 80 annotated examples, which is a small dataset but hopefully with highly informative examples.

7. Fine-tuning with the extended training dataset#

In this step, we’ll add the new examples to our training set and fine-tune a new version of our banking sentiment classifier.

Adding labeled examples to our previous training set#

Let’s add our new examples to our previous training set.

[ ]:

rb_dataset = rg.load("labeling_with_finetuned")

train_ds = rb_dataset.prepare_for_training()

tokenized_train_ds = train_ds.map(tokenize_function, batched=True)

train_dataset = concatenate_datasets([train_dataset, tokenized_train_ds])
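Because the evaluation set from step 3 is reused to compare model versions, it can be worth confirming that none of the newly added training texts also appear in it. This is a hedged sketch of such a sanity check on plain lists of strings; the helper overlapping_texts is hypothetical and the toy texts are only for illustration.

```python
def normalize(text):
    """Lowercase and collapse whitespace so trivial variants compare equal."""
    return " ".join(text.lower().split())


def overlapping_texts(train_texts, eval_texts):
    """Return the normalized texts that occur in both collections."""
    eval_set = {normalize(text) for text in eval_texts}
    return sorted({normalize(text) for text in train_texts} & eval_set)


# Toy data: one query appears in both sets with different casing and spacing.
toy_train = ["Why is my payment still pending?", "I would like to cancel a purchase."]
toy_eval = ["why is my payment  still pending?", "What's up with the extra fee I got?"]

overlap = overlapping_texts(toy_train, toy_eval)
print(overlap)
```

On the real data this could be called as overlapping_texts(train_dataset["text"], eval_dataset["text"]), assuming both datasets still carry a text column after tokenization.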

Training our sentiment classifier#

As we want to measure the effect of adding examples to our training set we will:

Fine-tune from the pre-trained sentiment weights (as we did before)

Use the previous test set and the extended train set (obtaining a metric we use to compare this new version with our previous model)

[ ]:

model = AutoModelForSequenceClassification.from_pretrained(
    "distilbert-base-uncased-finetuned-sst-2-english"
)

# Shuffle the extended training set and keep the result, so the trainer uses it
train_dataset = train_dataset.shuffle(seed=42)

trainer = Trainer(
    args=training_args,
    model=model,
    train_dataset=train_dataset,
    eval_dataset=eval_dataset,
    compute_metrics=compute_metrics,
)

trainer.train()

model.save_pretrained("distilbert-base-uncased-sentiment-banking")

Summary#

In this tutorial, you learned how to build a training set from scratch with the help of a pre-trained model, performing two iterations of predict > log > label.

Although this is somewhat of a toy example, you will be able to apply this workflow to your own projects to adapt existing models or build them from scratch.

In this tutorial, we’ve covered one way of building training sets: hand labeling. If you are interested in other methods, which could be combined with hand labeling, check out the following: